Architecture#

Asya is a Kubernetes-native async actor mesh. All components are designed around one principle: the message knows the way.

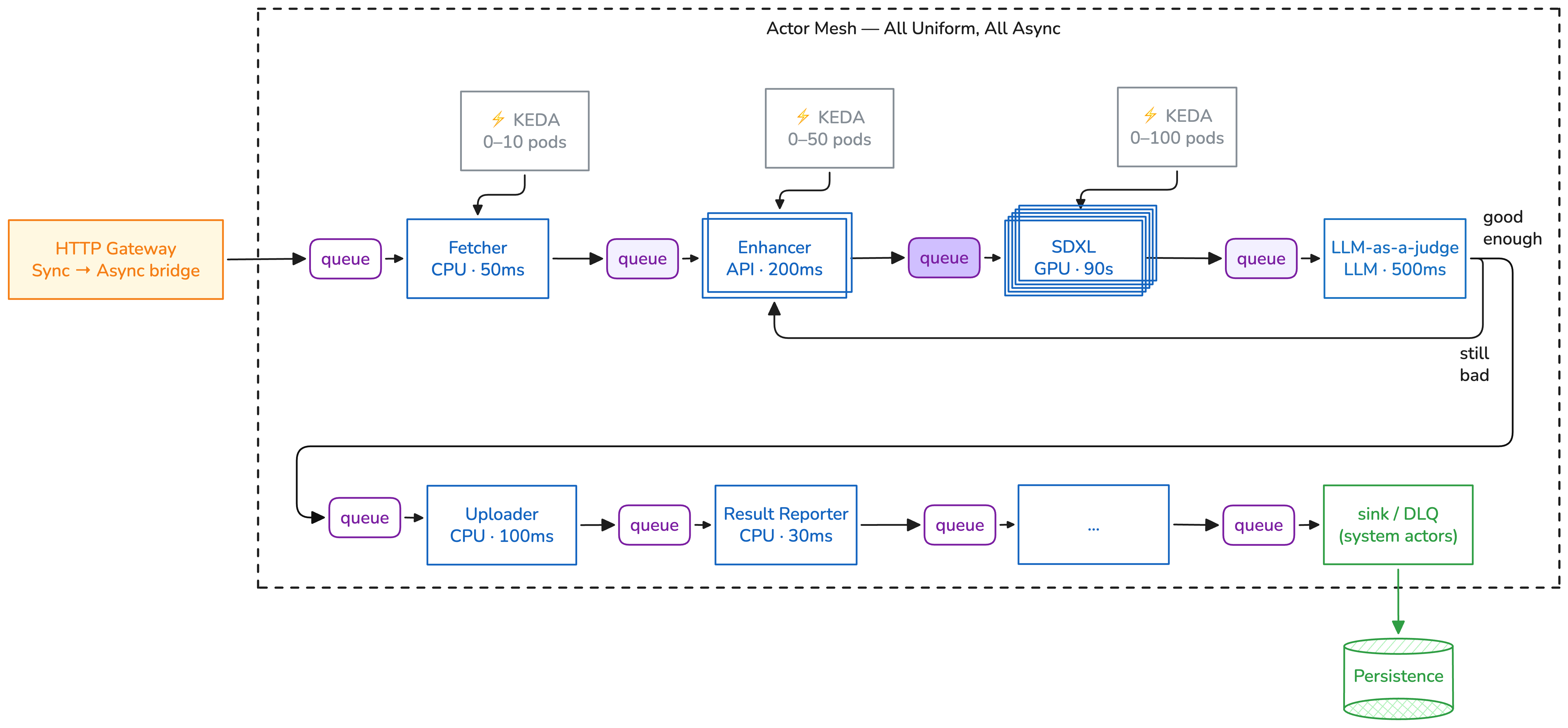

Actor Mesh#

Actors communicate through queues. Each message carries its own route. Each actor scales independently via KEDA.

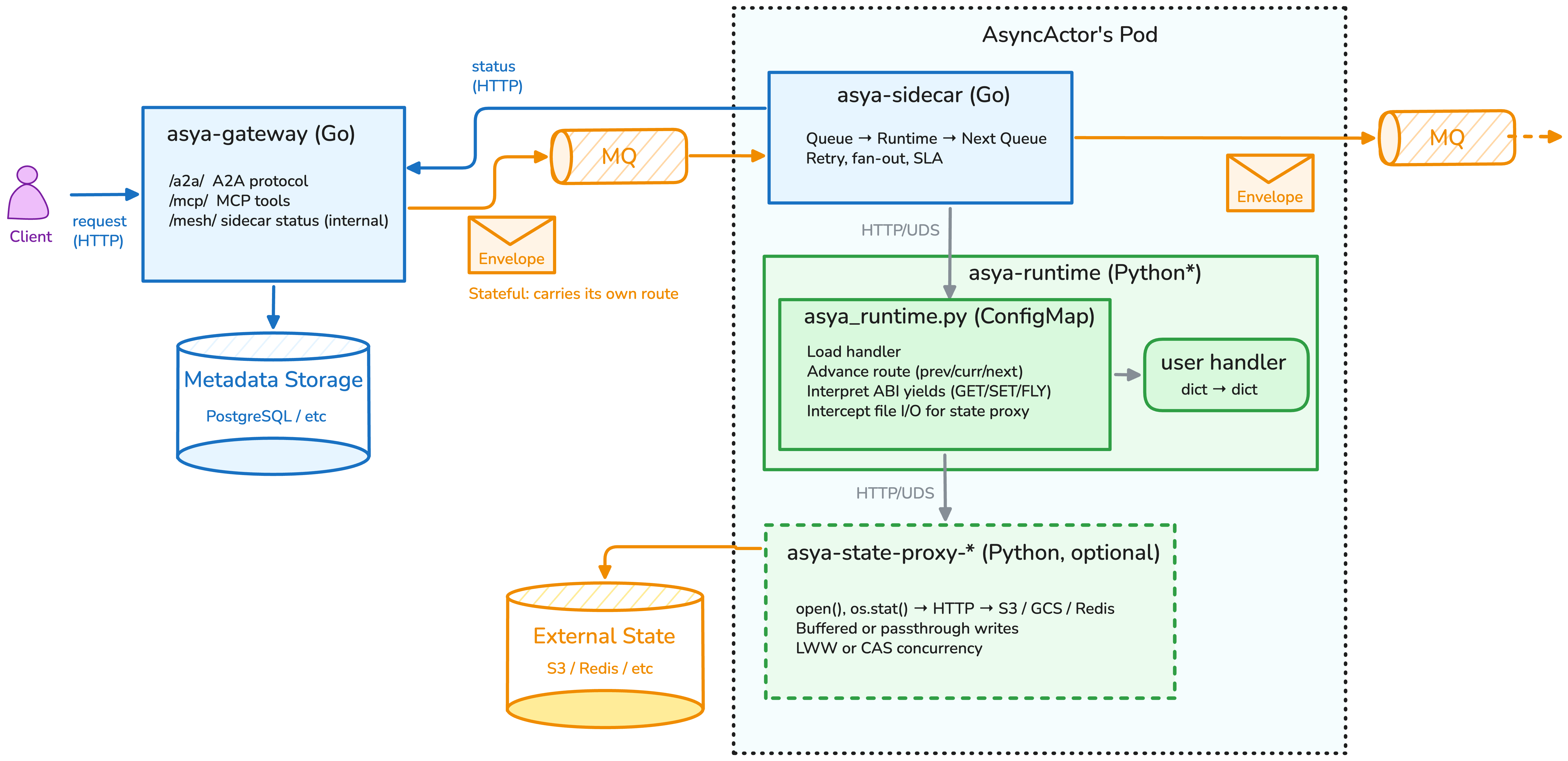

Actor Pod Anatomy#

Every actor pod has a sidecar (Go) for queue polling and routing, a runtime (Python) for your handler, and an optional state proxy for virtual persistent memory.

Core Components#

Actor Pod (your workload)#

Each actor pod has two injected containers:

- Sidecar (Go) — queue polling, envelope routing, retries, progress reporting, metrics

- Runtime (Python) — loads your handler, executes per envelope, communicates via Unix socket

Your handler sees only dict -> dict. The envelope structure, queue mechanics, and routing are invisible to it.
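As a minimal sketch of what dict -> dict means in practice, a handler could look like the function below. The function name `handle` and the payload keys `text` and `word_count` are assumptions made for illustration; this section does not specify how the runtime is told which function to load.

```python
def handle(payload: dict) -> dict:
    """Hypothetical handler: takes a plain dict, returns a plain dict."""
    text = payload.get("text", "")
    # Return a new dict instead of mutating the input.
    return {**payload, "word_count": len(text.split())}


if __name__ == "__main__":
    print(handle({"text": "the message knows the way"}))
```

Nothing in the function refers to queues, routes, or envelopes, which is the point: the same code can be unit-tested without any Asya component running.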

Gateway (optional)#

The gateway bridges synchronous HTTP with the async mesh:

| Mode | Audience | Endpoints |
|---|---|---|
| api | External clients | /a2a/ (agents), /mcp (tools), /.well-known/agent.json |
| mesh | Sidecars (internal) | /mesh (progress, FLY events, final results) |

Both modes share a database (PostgreSQL) for task state. The gateway translates between HTTP request/response and the fire-and-forget queue-based mesh.
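The shared task state can be pictured with a small in-memory stand-in. This is only a sketch under assumptions: the class name `TaskStore`, its methods, and the status values `pending`, `running`, and `succeeded` are hypothetical, and a dict replaces PostgreSQL. It shows the api side creating and reading a task while the mesh side updates it.

```python
import uuid


class TaskStore:
    """In-memory stand-in for the shared task-state database (hypothetical schema)."""

    def __init__(self):
        self._tasks = {}

    def create(self) -> str:
        """api side: register a task before its envelope is enqueued."""
        task_id = str(uuid.uuid4())
        self._tasks[task_id] = {"status": "pending", "progress": [], "result": None}
        return task_id

    def record_progress(self, task_id: str, note: str) -> None:
        """mesh side: a sidecar reports intermediate progress."""
        task = self._tasks[task_id]
        task["status"] = "running"
        task["progress"].append(note)

    def complete(self, task_id: str, result: dict) -> None:
        """mesh side: the final result arrives."""
        task = self._tasks[task_id]
        task["status"] = "succeeded"
        task["result"] = result

    def get(self, task_id: str) -> dict:
        """api side: a waiting client reads the current state."""
        return self._tasks[task_id]


if __name__ == "__main__":
    store = TaskStore()
    tid = store.create()
    store.record_progress(tid, "actor 1 of 2 done")
    store.complete(tid, {"answer": 42})
    print(store.get(tid)["status"])
```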

Crossplane Compositions#

Crossplane manages the declarative lifecycle of actors. Each AsyncActor resource creates:

- Message queue (SQS, RabbitMQ, or Pub/Sub)

- Deployment with sidecar + runtime containers rendered inline

- KEDA ScaledObject watching queue depth

Deleting an AsyncActor cascades to all three. No orphaned queues.
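To make the third item concrete, the sketch below builds a KEDA ScaledObject for an SQS-backed actor as a Python dict. The trigger type `aws-sqs-queue` and its `queueURL`, `queueLength`, and `awsRegion` fields come from KEDA's SQS scaler. Everything else is an assumption: the function name, the `-scaler` naming, the replica bounds, and the placeholder queue URL are hypothetical, and the manifest that the Asya composition actually renders may differ. Trigger authentication is omitted.

```python
import json


def render_scaled_object(actor: str, queue_url: str, region: str,
                         queue_length: int = 5, max_replicas: int = 10) -> dict:
    """Build a KEDA ScaledObject manifest (as a dict) for one actor's SQS queue."""
    return {
        "apiVersion": "keda.sh/v1alpha1",
        "kind": "ScaledObject",
        "metadata": {"name": f"{actor}-scaler"},  # hypothetical naming scheme
        "spec": {
            "scaleTargetRef": {"name": actor},  # the actor's Deployment
            "minReplicaCount": 0,               # assumed: idle actors scale to zero
            "maxReplicaCount": max_replicas,
            "triggers": [{
                "type": "aws-sqs-queue",
                "metadata": {
                    "queueURL": queue_url,
                    # Target number of messages per replica; KEDA expects strings here.
                    "queueLength": str(queue_length),
                    "awsRegion": region,
                },
            }],
        },
    }


if __name__ == "__main__":
    manifest = render_scaled_object(
        "my-actor",
        "https://sqs.us-east-1.amazonaws.com/123456789012/my-actor",  # placeholder
        "us-east-1",
    )
    print(json.dumps(manifest, indent=2))
```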

Crew (system actors)#

Crew actors handle framework-level concerns:

| Actor | Role |
|---|---|
| x-sink | Persists successful results, reports completion to gateway |
| x-sump | Terminal error collection, metrics, DLQ |
| x-pause | Checkpoints envelope to S3 for human-in-the-loop |
| x-resume | Restores checkpointed envelope, re-injects into mesh |

State Proxy (optional)#

The state proxy gives actors virtual persistent memory. Actors read and write /state/... paths using standard file I/O, and the proxy translates these operations to S3, GCS, Redis, or NATS KV.
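A minimal sketch of what "standard file I/O" could look like from inside a handler follows. The helper name `bump_counter`, the JSON layout, and the flat `<key>.json` file naming are assumptions for illustration. The root directory is a parameter so the sketch runs anywhere; in an actor pod it would presumably be `/state`.

```python
import json
import tempfile
from pathlib import Path


def bump_counter(state_root: Path, key: str) -> int:
    """Read, increment, and write back a counter using ordinary file I/O."""
    path = state_root / f"{key}.json"
    count = json.loads(path.read_text())["count"] if path.exists() else 0
    count += 1
    path.write_text(json.dumps({"count": count}))
    return count


if __name__ == "__main__":
    # A temporary directory stands in for the /state mount.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        bump_counter(root, "visits")
        print(bump_counter(root, "visits"))
```

The read-modify-write above is not atomic. Whether the proxy offers any concurrency guarantees across replicas is not covered in this section, so treat the pattern as single-writer only.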

Flow Compiler (build-time tool)#

The flow compiler transforms Python control flow (if/else, while, asyncio.gather) into flat actor execution graphs. The compiler is purely additive: its output is standard AsyncActor manifests, so compiled flows deploy like any hand-written actor.
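The kind of control flow in question can be illustrated with ordinary Python. The snippet below is not verified Flow DSL syntax, and the step names `classify`, `summarize`, and `tag` are hypothetical stubs. It only shows an if/else branch and an `asyncio.gather` fan-out of the sort the compiler is described as flattening, with each step presumably becoming an actor.

```python
import asyncio


# Stub steps standing in for real work.
async def classify(doc: dict) -> dict:
    return {**doc, "long": len(doc["text"]) > 20}


async def summarize(doc: dict) -> dict:
    return {**doc, "summary": doc["text"][:20]}


async def tag(doc: dict) -> dict:
    return {**doc, "tags": sorted(set(doc["text"].lower().split()))[:3]}


async def flow(doc: dict) -> dict:
    doc = await classify(doc)
    if doc["long"]:
        # Fan-out: both steps run concurrently, then their results are merged.
        summarized, tagged = await asyncio.gather(summarize(doc), tag(doc))
        doc = {**summarized, **tagged}
    return doc


if __name__ == "__main__":
    print(asyncio.run(flow({"text": "the message knows the way home"})))
```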

Message Flow#

1. Client sends HTTP request to Gateway (or enqueues directly)

2. Gateway creates task, injects envelope into first actor's queue

3. Sidecar consumes envelope from queue

4. Sidecar forwards payload to Runtime via Unix socket

5. Runtime executes your handler, returns result

6. Sidecar advances the route, sends envelope to next actor's queue

7. Repeat steps 3-6 for each actor in route.next

8. x-sink persists final result, reports status to gateway

9. Gateway delivers result to client (blocking wait or SSE stream)

The core loop: Queue -> Sidecar -> Your Code -> Sidecar -> Next Queue
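The core loop can be simulated in memory to show how a route advances. This is a sketch under stated assumptions, not the sidecar's implementation: the envelope layout (`payload` plus a `route` with list-valued `prev` and `next` and a string `curr`), the two toy handlers, and the rule that an exhausted `route.next` sends the envelope to x-sink are all hypothetical, and `collections.deque` replaces the real queues.

```python
from collections import deque


def step_upper(payload: dict) -> dict:
    return {**payload, "text": payload["text"].upper()}


def step_exclaim(payload: dict) -> dict:
    return {**payload, "text": payload["text"] + "!"}


HANDLERS = {"upper": step_upper, "exclaim": step_exclaim}


def run_mesh(envelope: dict) -> dict:
    """Drive one envelope through in-memory queues until it reaches x-sink."""
    queues = {name: deque() for name in HANDLERS}
    queues["x-sink"] = deque()
    queues[envelope["route"]["curr"]].append(envelope)

    while not queues["x-sink"]:
        for name, handler in HANDLERS.items():
            if not queues[name]:
                continue
            env = queues[name].popleft()       # sidecar consumes the envelope
            payload = handler(env["payload"])  # runtime runs the handler
            route = env["route"]
            remaining = route["next"]
            # Assumed rule: when no actors remain, the envelope goes to x-sink.
            target = remaining[0] if remaining else "x-sink"
            advanced = {
                "prev": route["prev"] + [route["curr"]],
                "curr": target,
                "next": remaining[1:],
            }
            queues[target].append({"payload": payload, "route": advanced})
    return queues["x-sink"].popleft()


if __name__ == "__main__":
    start = {"payload": {"text": "hi"},
             "route": {"prev": [], "curr": "upper", "next": ["exclaim"]}}
    print(run_mesh(start))
```

The handlers never touch the route; only the loop body that plays the sidecar's role does, which mirrors the split between steps 5 and 6.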

Deployment Patterns#

AWS (SQS + S3)#

- Crossplane creates SQS queues via AWS Provider

- IAM via IRSA or Pod Identity for queue/S3 access

- Results stored in S3

- KEDA scales via SQS queue depth metrics

- See: AWS EKS Guide

GCP (Pub/Sub + GCS)#

- Crossplane creates Pub/Sub subscriptions via GCP Provider

- IAM via Workload Identity for Pub/Sub/GCS access

- Results stored in GCS

- KEDA scales via Pub/Sub subscription metrics

- See: GCP GKE Guide

Local / Self-hosted (RabbitMQ + MinIO)#

- RabbitMQ or LocalStack SQS for transport

- MinIO (S3-compatible) for storage

- Username/password auth from K8s secrets

- See: Quickstart

Protocols#

- Envelope: message structure, routing (prev/curr/next), status

- Sidecar-Runtime: Unix socket framing, error handling

- Gateway API: A2A, MCP, mesh HTTP endpoints

- ABI Protocol: generator yield forms (GET, SET, FLY)

- AsyncActor CRD: full manifest reference

- Flow DSL — flow compilation semantics